1. Introduction: The Industry’s Favorite Architecture Lie

Event-Driven Architecture (EDA) has quietly become the default answer to modern system design.

- Microservices? → Use events

- Scalability issues? → Use events

- Decoupling problems? → Use events

But here’s the uncomfortable truth:

Most teams adopt event-driven architecture before they understand distributed systems.

And that’s where things go wrong.

Because EDA doesn’t just change how services communicate—it fundamentally changes:

- How failures behave

- How systems evolve

- How engineers think

This is not just an architectural style.

This is a system behavior transformation.

2. What Event-Driven Architecture Really Is

Let’s strip away the hype.

EDA is not Kafka.

EDA is not async messaging.

EDA is not microservices.

EDA is this:

A system where state changes are communicated indirectly via events instead of direct control flow.

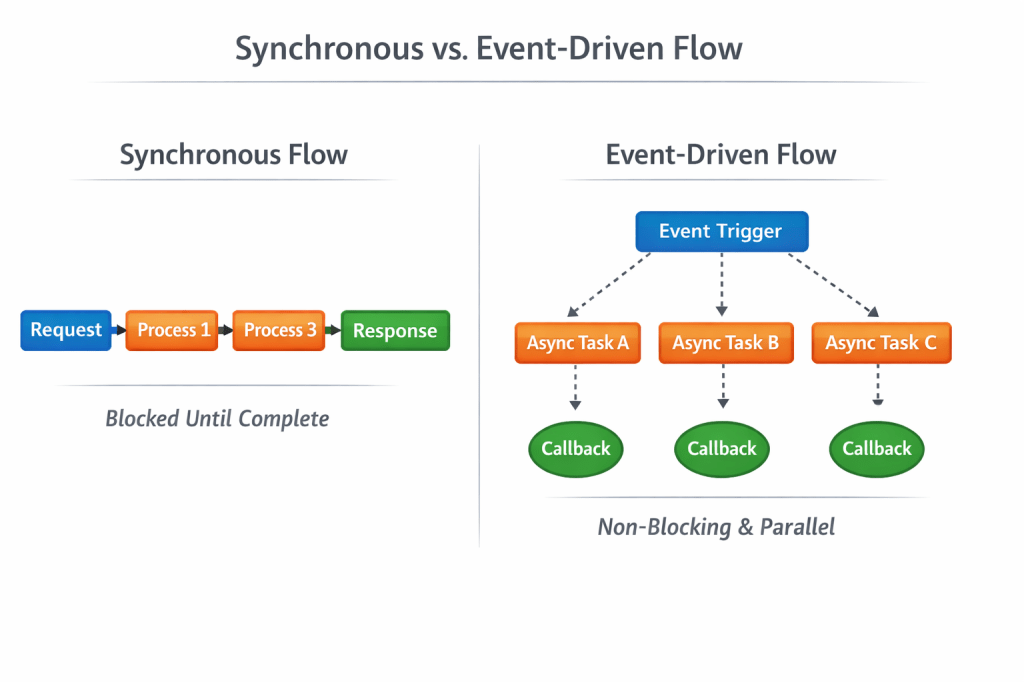

Diagram 1

Key Insight:

- Synchronous = predictable flow

- Event-driven = emergent behavior

That distinction is everything.

3. The Illusion of Decoupling

EDA is often sold as “loosely coupled.”

That’s partially true—but dangerously misleading.

What actually happens:

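You remove structural coupling (direct calls between services)

…but introduce behavioral coupling and hidden timing dependencies: consumers now rely on the event's shape, meaning, and arrival order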

Example

Service A emits:

{ "orderId": "123", "status": "CREATED"}

Now:

- Service B depends on it

- Service C depends on it

- Service D depends on it

But A has no idea.

You didn’t remove coupling. You hid it.
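
To make the hidden dependency concrete, here is a minimal sketch using an in-memory stand-in for a broker. The `EventBus` class, the `OrderCreated` event name, and the two subscribers are hypothetical and only illustrate the idea; a real broker behaves differently in many ways.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Tiny in-memory stand-in for a broker (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The publisher never sees this list; it only knows the event type.
        for handler in self._handlers[event_type]:
            handler(payload)


bus = EventBus()
shipped: List[str] = []
invoiced: List[str] = []

# Services B and C both read fields that Service A chose to emit.
bus.subscribe("OrderCreated", lambda e: shipped.append(e["orderId"]))
bus.subscribe("OrderCreated", lambda e: invoiced.append(e["orderId"] + ":" + e["status"]))

# Service A: nothing in this call names B or C, yet both depend on its shape.
bus.publish("OrderCreated", {"orderId": "123", "status": "CREATED"})
```

If Service A later renames `orderId`, nothing in A's own code fails. The breakage shows up in the subscribers, which is exactly the coupling A cannot see.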

4. The First Real Cost: Loss of Control

In a traditional system:

A → B → C

You know:

- What runs

- When it runs

- What fails

In EDA:

A → Event → Unknown chain of reactions

You don’t know:

- Who consumes the event

- In what order

- Whether the flow completes

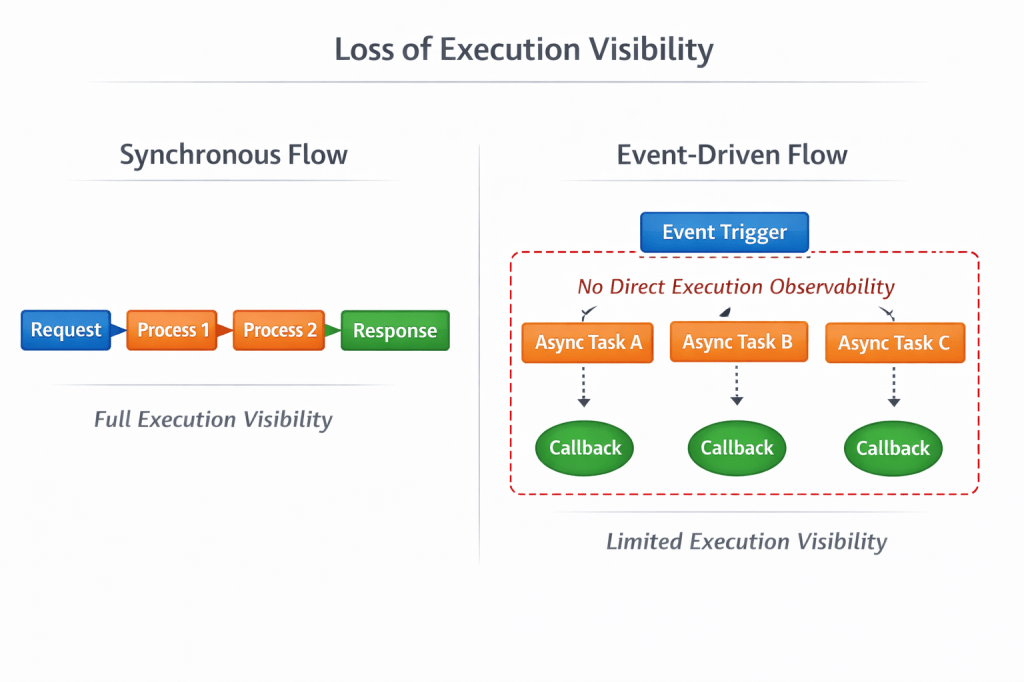

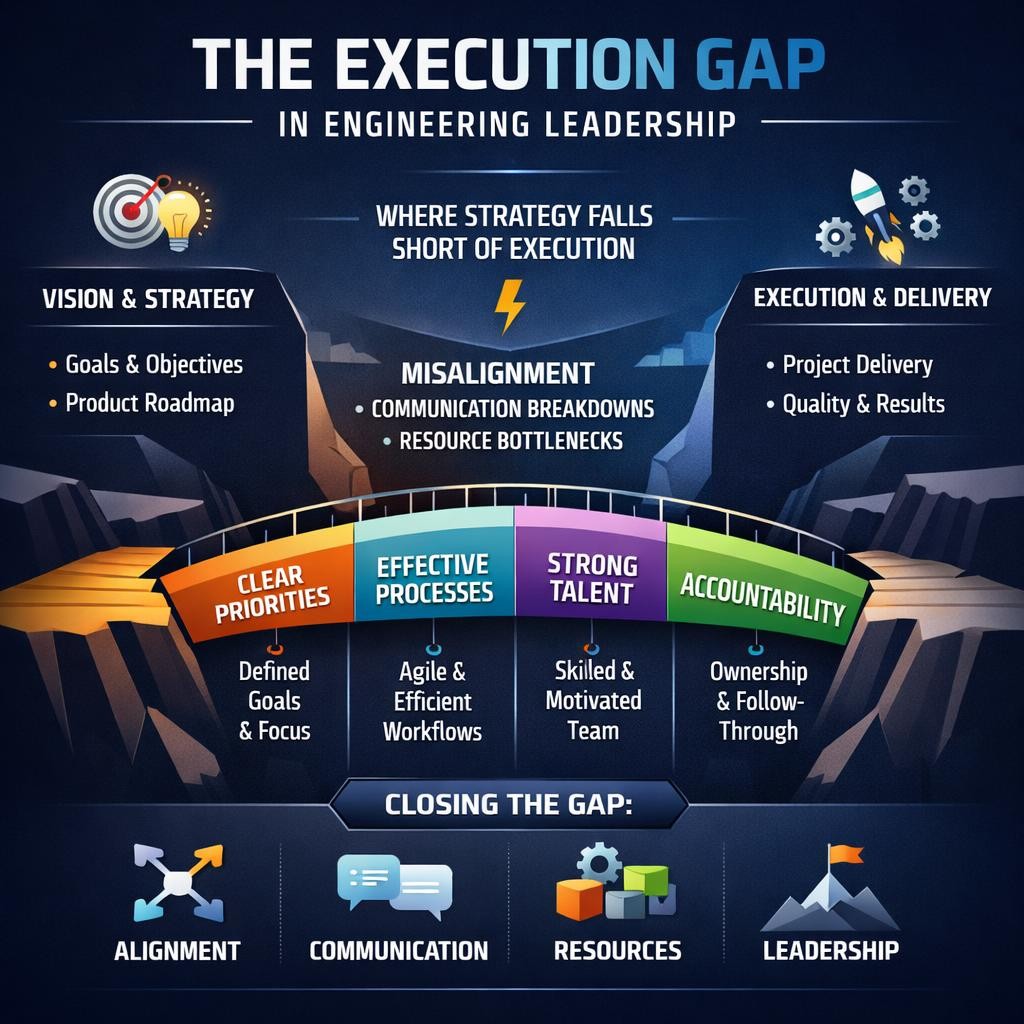

Diagram 2

The gap:

What you think happens vs what actually happens diverges over time.

5. Eventual Consistency: The Silent Killer

EDA systems are almost always:

Eventually consistent

That sounds harmless—until you attach business requirements.

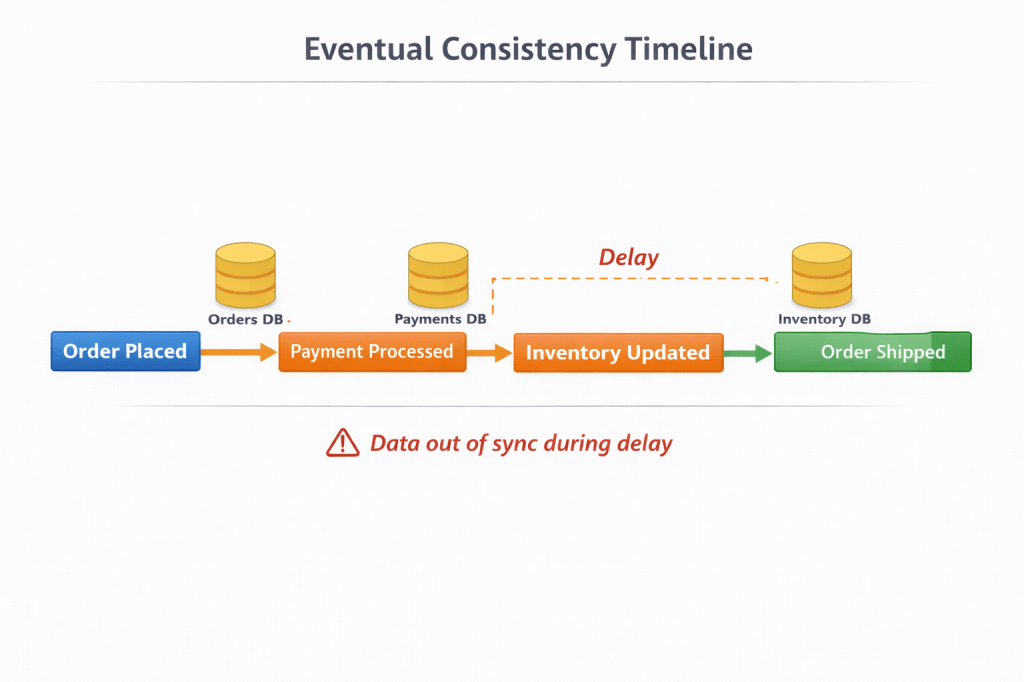

Diagram 3

What this forces you to build:

- Retry mechanisms

- Idempotency layers

- Compensation logic (Saga pattern)

- Dead-letter queues

At this point:

You are implementing a distributed transaction system manually
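
As a rough sketch of two items from that list, the snippet below retries a handler a fixed number of times and then parks the event in a dead-letter list. The `deliver` function, the handlers, and the in-memory `dlq` are assumptions made for illustration; a production consumer would add backoff and persistent storage.

```python
from typing import Callable, List, Tuple

DeadLetter = Tuple[dict, str]


def deliver(event: dict, handler: Callable[[dict], None],
            dead_letters: List[DeadLetter], max_attempts: int = 3) -> bool:
    """Try the handler up to max_attempts times, then park the event."""
    last_error = ""
    for _ in range(max_attempts):
        try:
            handler(event)
            return True
        except Exception as exc:  # a real consumer would catch narrower errors
            last_error = repr(exc)
    # Retries exhausted: keep the event and the reason for later inspection.
    dead_letters.append((event, last_error))
    return False


calls = {"n": 0}


def flaky_handler(event: dict) -> None:
    # Fails twice, then succeeds, like a briefly unavailable dependency.
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("downstream unavailable")


def broken_handler(event: dict) -> None:
    raise ValueError("cannot process")


dlq: List[DeadLetter] = []
ok = deliver({"orderId": "123"}, flaky_handler, dlq)
failed = deliver({"orderId": "124"}, broken_handler, dlq)
```

Note that the retry loop calls the handler more than once for the same event, which is why retries and idempotency appear on the list together.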

6. Debugging: Where Systems Go to Die

In a monolith:

Error → Stack trace → Fix

In EDA:

Error → Logs → More logs → Guessing → More guessing

Why debugging fails:

- No single execution path

- No central control flow

- Asynchronous timing issues

- Partial failures everywhere

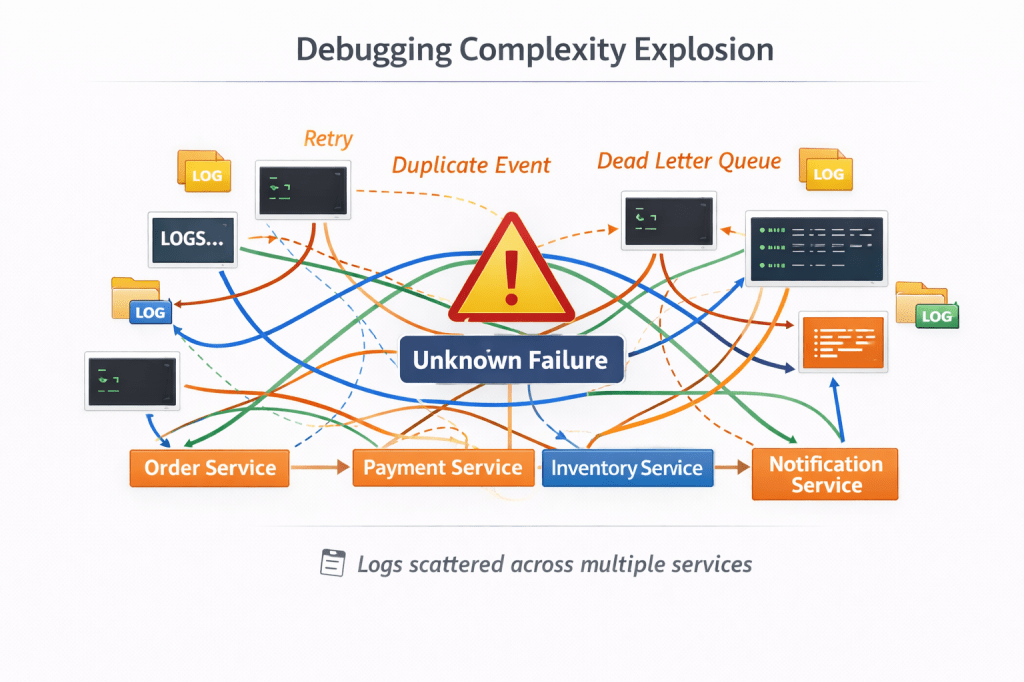

Diagram 4

Required (Non-Optional) Tools

If you use EDA, you MUST have:

- Distributed tracing (e.g., OpenTelemetry)

- Correlation IDs

- Centralized logging

- Event replay capability

Without these:

Your system is effectively undebuggable at scale
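
A correlation ID is the cheapest of these to adopt. The sketch below shows one possible way to carry it through a chain of events; the envelope fields (`eventId`, `correlationId`, `causationId`) and the service names are assumptions, not a standard.

```python
import uuid
from typing import List, Optional


def new_event(event_type: str, payload: dict, cause: Optional[dict] = None) -> dict:
    """Build an event envelope that carries a correlation ID forward."""
    return {
        "eventId": str(uuid.uuid4()),
        "type": event_type,
        # Reuse the ID of the triggering event, or start a new trace.
        "correlationId": cause["correlationId"] if cause else str(uuid.uuid4()),
        # Points at the event that directly caused this one, if any.
        "causationId": cause["eventId"] if cause else None,
        "payload": payload,
    }


log: List[str] = []


def log_event(service: str, event: dict) -> None:
    log.append(f"{event['correlationId']} {service} {event['type']}")


order_created = new_event("OrderCreated", {"orderId": "123"})
log_event("order-service", order_created)

payment_done = new_event("PaymentCaptured", {"orderId": "123"}, cause=order_created)
log_event("payment-service", payment_done)

email_sent = new_event("EmailSent", {"orderId": "123"}, cause=payment_done)
log_event("email-service", email_sent)

# Filtering centralized logs by one ID reconstructs the whole flow.
trace = [line for line in log if line.startswith(order_created["correlationId"])]
```

With every log line tagged this way, a single search in centralized logging returns the full path of one order across services.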

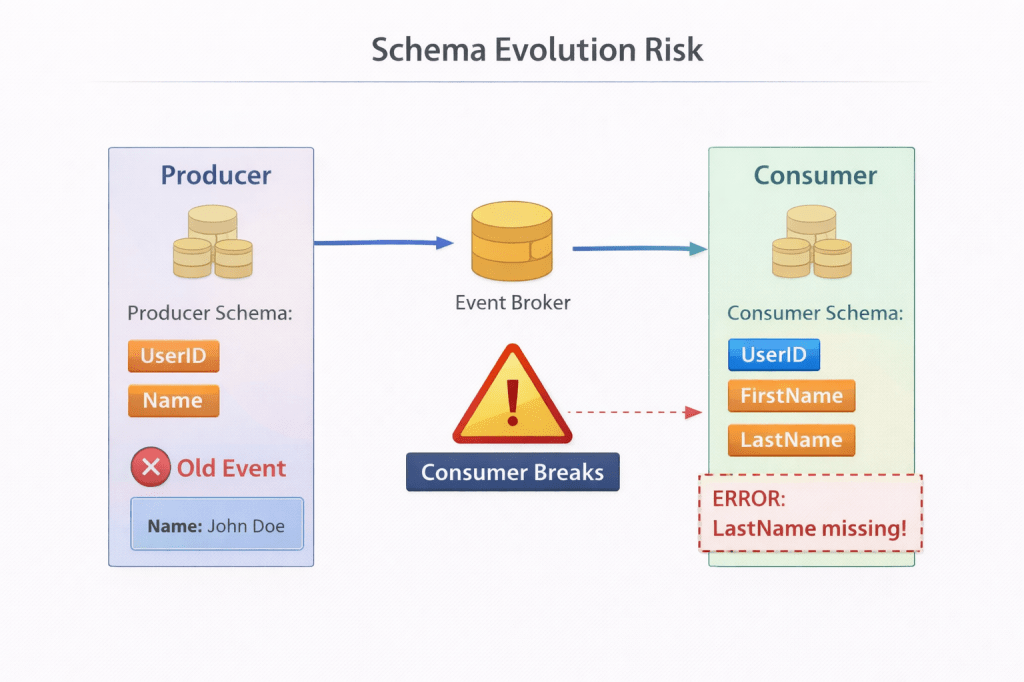

7. Data Contracts: The Hidden Time Bomb

Events are not just messages.

They are:

Immutable contracts across time

The problem:

You deploy Service A v2:

{ "orderId": "123", "status": "CREATED", "currency": "USD"}

But Service B still expects:

{ "orderId": "123", "status": "CREATED"}

What can break if Service B validates strictly, or if a later change renames or removes a field:

- Consumers crash

- Silent data corruption

- Partial processing

Diagram 5

Required solutions:

- Schema registry

- Backward compatibility rules

- Versioning strategy

This is:

API versioning × distributed systems × time
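
One common way to keep consumers backward compatible is a tolerant reader: require only the fields you need, treat newer fields as optional, and ignore the rest. The sketch below assumes the two payloads shown above; the `OrderCreated` dataclass, the `parse_order_created` function, and the extra `channel` field are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OrderCreated:
    order_id: str
    status: str
    currency: Optional[str]  # absent in v1 events


def parse_order_created(raw: dict) -> OrderCreated:
    """Tolerant reader: require only what is needed, ignore unknown fields."""
    return OrderCreated(
        order_id=raw["orderId"],
        status=raw["status"],
        # Added in v2. None means "not provided" rather than a guessed default.
        currency=raw.get("currency"),
    )


v1 = parse_order_created({"orderId": "123", "status": "CREATED"})
v2 = parse_order_created(
    {"orderId": "123", "status": "CREATED", "currency": "EUR", "channel": "web"}
)
```

Using `None` instead of a default such as "USD" is a deliberate choice here: a guessed default is one way the silent data corruption listed above creeps in.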

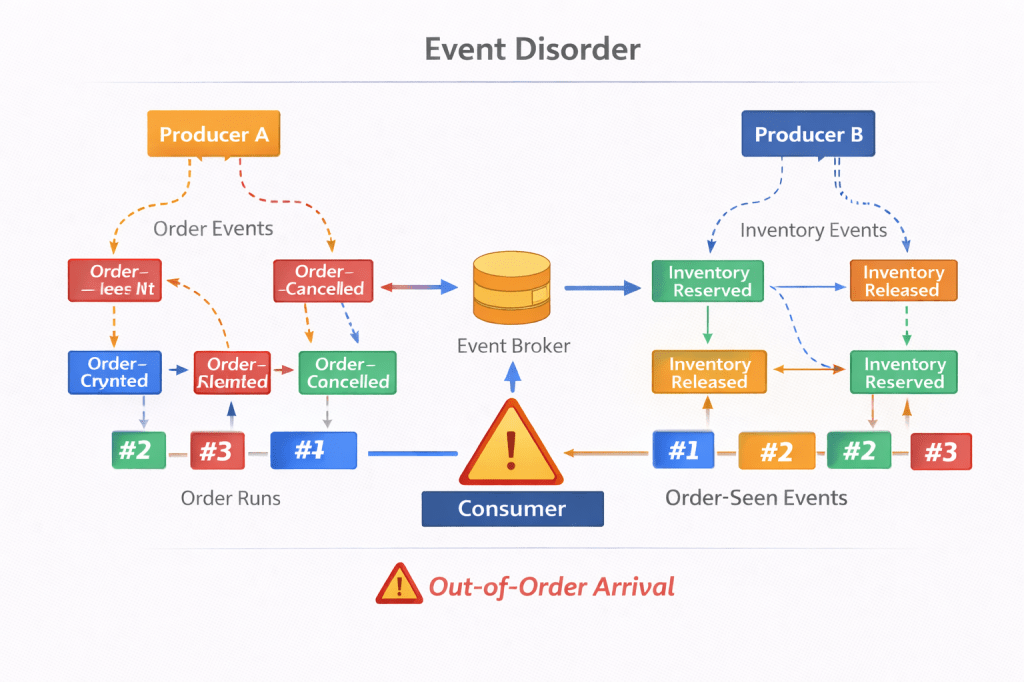

8. Duplicate and Out-of-Order Events

This is not a bug.

With at-least-once delivery, the usual broker guarantee, this is expected behavior.

You WILL see:

- Duplicate events

- Out-of-order delivery

- Partial processing

Diagram 6

What you must implement:

- Idempotency keys

- Deduplication logic

- Ordering constraints where the broker supports them (e.g., per-partition ordering in Kafka)

If you skip this:

You will corrupt your own system.
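
Here is a minimal sketch of a consumer that tolerates both problems. It assumes each event carries a unique `eventId` and a per-order sequence number `seq` assigned by the producer; both fields, and the `OrderProjection` class, are hypothetical.

```python
from typing import Dict, Set


class OrderProjection:
    """Keeps the latest status per order, tolerating duplicates and reordering."""

    def __init__(self) -> None:
        self.seen_ids: Set[str] = set()
        self.last_seq: Dict[str, int] = {}
        self.status: Dict[str, str] = {}

    def handle(self, event: dict) -> str:
        # Deduplication: an event ID is applied at most once.
        if event["eventId"] in self.seen_ids:
            return "duplicate"
        self.seen_ids.add(event["eventId"])

        # Ordering: ignore events older than what was already applied.
        order_id = event["orderId"]
        if event["seq"] <= self.last_seq.get(order_id, 0):
            return "stale"

        self.last_seq[order_id] = event["seq"]
        self.status[order_id] = event["status"]
        return "applied"


created = {"eventId": "e1", "orderId": "123", "seq": 1, "status": "CREATED"}
paid = {"eventId": "e2", "orderId": "123", "seq": 2, "status": "PAID"}

projection = OrderProjection()
# Delivered out of order, then with a duplicate.
results = [projection.handle(paid), projection.handle(created), projection.handle(paid)]
```

Dropping stale events only works when the newest state is all that matters. If every event must be applied, the consumer has to buffer and reorder instead, and the set of seen IDs needs durable storage in a real system.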

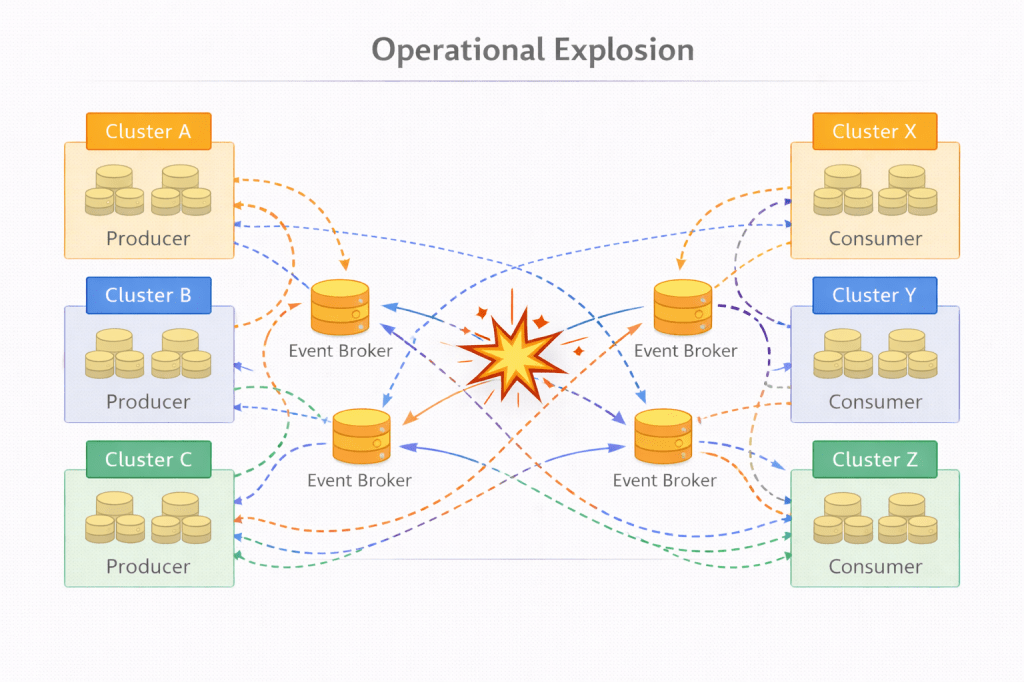

9. Operational Complexity: The Real Price

EDA turns your system into a platform.

Your architecture now includes:

- Message brokers (Kafka, RabbitMQ)

- Retry pipelines

- Dead-letter queues

- Monitoring systems

- Schema registry

- Event replay systems

Diagram 7

This is not “architecture elegance.”

This is operational overhead at scale

10. Cognitive Load: The Most Ignored Cost

This is where most systems fail—not technically, but organizationally.

Ask a developer:

“What happens when an order is placed?”

In synchronous systems:

Clear answer.

In EDA:

“It depends…”

- Which services are up

- Which events are delayed

- Which retries succeed

Diagram 8 — Cognitive Load Gap

TEAM UNDERSTANDING

Simple Flow → Order → Payment → Done

----------------------------------------

REAL SYSTEM

Events → Retries → Failures → Partial State → Recovery

If engineers can’t reason about the system, they can’t safely change it.

11. When NOT to Use Event-Driven Architecture

Let’s cut through the noise.

❌ 1. CRUD Applications

- Simple request-response

- Low complexity

→ EDA adds zero value

❌ 2. Strong Consistency Systems

- Banking

- Trading

- Critical workflows

→ You need correctness NOW, not eventually

❌ 3. Small Teams

EDA requires maturity:

- Observability

- Debugging discipline

- Operational ownership

❌ 4. Low Scale Systems

If you’re not dealing with:

- High throughput

- Async workloads

→ EDA is premature optimization

12. When EDA Actually Works

EDA shines in specific conditions.

✅ 1. High-Scale Systems

- Millions of events

- Parallel consumers

✅ 2. Decoupled Domains

Different teams, independent systems

✅ 3. Event Sourcing

- Audit trails

- Replayability

✅ 4. Real-Time Systems

- Streaming

- Notifications

- IoT

13. The Hybrid Architecture (The Real Answer)

The best systems are not pure EDA.

They are:

Hybrid systems with controlled complexity

Diagram 9 — Hybrid Architecture

CRITICAL PATH (SYNC)

User → Order Service → Payment → Confirmation

---------------------------------------------

ASYNC SIDE EFFECTS (EVENTS)

OrderPlaced Event →

→ Email Service

→ Analytics

→ Notification System

Why this works:

- Critical flow = reliable

- Side effects = scalable
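
The split can be sketched in a few lines. This assumes a synchronous `charge` call on the critical path and a `publish` callback standing in for the broker client; both names, and the decline rule, are made up for the example.

```python
from typing import Callable, List


class PaymentDeclined(Exception):
    pass


def charge(order: dict) -> None:
    """Stand-in for a synchronous payment call (hypothetical)."""
    if order["amount"] <= 0:
        raise PaymentDeclined(order["orderId"])


def place_order(order: dict, publish: Callable[[dict], None]) -> str:
    # Critical path: runs inline, so the caller sees success or failure now.
    charge(order)
    # Side effects: announced only after the critical path has succeeded.
    publish({"type": "OrderPlaced", "orderId": order["orderId"]})
    return "CONFIRMED"


published: List[dict] = []
result = place_order({"orderId": "123", "amount": 50}, published.append)

try:
    place_order({"orderId": "124", "amount": 0}, published.append)
    declined = False
except PaymentDeclined:
    declined = True
```

The failed order never produces an event, so email, analytics, and notifications only ever react to orders that were actually confirmed.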

14. Orchestration vs Choreography

This is where senior engineers separate themselves from average ones.

Choreography (default EDA)

- Services react blindly

- No central control

→ Scales poorly in complexity

Orchestration (recommended)

- Central workflow controller

- Explicit flow definition

Diagram 10 — Orchestration vs Choreography

CHOREOGRAPHY

Event → Service A → Event → Service B → Event → Service C

(No central control)

----------------------------------------

ORCHESTRATION

Orchestrator

↓

Service A → Service B → Service C

If your workflows matter, don’t leave them to chaos.
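
A minimal sketch of what an orchestrator adds: the workflow is an explicit list of steps, and a failure triggers compensation in reverse order. The `run_saga` function and the step names are hypothetical, and a real workflow engine would also persist progress between steps.

```python
from typing import Callable, List, Tuple

# (name, action, compensation)
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse."""
    completed: List[Step] = []
    for step in steps:
        name, action, _ = step
        try:
            action()
            log.append(f"done:{name}")
            completed.append(step)
        except Exception:
            log.append(f"failed:{name}")
            for done_name, _, compensate in reversed(completed):
                compensate()
                log.append(f"undone:{done_name}")
            return False
    return True


def noop() -> None:
    pass


def fail_shipping() -> None:
    raise RuntimeError("no carrier available")


history: List[str] = []
succeeded = run_saga(
    [
        ("reserve_stock", noop, noop),
        ("charge_payment", noop, noop),
        ("book_shipping", fail_shipping, noop),
    ],
    history,
)
```

The whole flow, including its failure path, is readable in one place, which is the property choreography gives up.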

15. A Practical Decision Framework

Before choosing EDA, ask:

1. Do you need async processing?

No → don’t use it

2. Can your system tolerate inconsistency?

No → avoid it

3. Do you have observability maturity?

No → you’re not ready

4. Is scale a real problem?

No → keep it simple

16. Final Truth

Event-Driven Architecture is powerful.

But here’s the reality:

It amplifies both good engineering and bad decisions

Used correctly:

- Scalable

- Flexible

- Resilient

Used blindly:

- Unpredictable

- Fragile

- Undebuggable

🔥 Closing Thought

“The goal is not to build modern systems.

The goal is to build systems your team can understand, debug, and evolve.”
